Summary: A zero-trust approach helps prevent data breaches by continuously verifying every request, so your team can use AI safely without giving up control.

Artificial intelligence is well past the experimentation stage. Many teams now rely on it daily to analyze data, assist employees, and automate tasks that once required manual work. As a result, AI systems are gaining access to more company resources, internal tools, and sensitive information.

That level of access raises important questions about control. Who can interact with an AI system? What data can it reach? And how do you prevent misuse when models connect to multiple services, users, and datasets?

These questions are pushing businesses to rethink how they approach access management, and zero trust is increasingly part of that conversation. Instead of assuming that systems or users inside the network are safe by default, zero trust requires verification for each request.

In the sections ahead, we’ll look at where zero trust fits into AI security, the risks organizations should be aware of, and practical ways to apply zero-trust principles to AI environments.

Does zero trust actually mean anything for AI?

The biggest problem with artificial intelligence right now isn’t the technology itself but the way we treat it: like a special guest who doesn’t have to follow the house rules. We’ve spent years tightening up who can see what, and then we plug in a generative AI tool that, by design, takes in as much data as it can reach.

Why does this matter? The concept of zero trust means exactly what it sounds like: not trusting anything by default. For a long time, standard IT setups assumed that if a user or an app made it past the initial login, they were safe and could look around. With zero trust, you can’t make that assumption. It forces every person, device, and software integration to continuously prove their identity and justify why they need the specific data they’re asking for, request by request.

This is why a zero-trust security architecture is so well suited to AI. If you build your setup on zero-trust principles, it no longer matters whether a tool is “official” or not. You treat every request from an AI agent or user as a potential risk and scrutinize it accordingly.

Instead of giving a tool wide-ranging permissions, you apply least privilege access. If a predictive AI needs to look at Q3 sales figures, it gets that specific dataset and nothing else: no access to internal communication channels, no peeking at HR files, and no “roaming” permissions.
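
To make least privilege concrete, here is a minimal Python sketch of a default-deny grant table. The agent names, dataset paths, and the `is_allowed` helper are hypothetical illustrations, not any product's API. Each agent gets an explicit set of resources. Anything not listed is refused, and that includes every request from an unknown agent.

```python
# Hypothetical grant table: agent names and dataset paths are illustrative only.
# Each agent maps to the exact resources it may read; nothing else is allowed.
GRANTS: dict[str, frozenset[str]] = {
    "sales-forecast-bot": frozenset({"datasets/sales/q3"}),
    "email-draft-bot": frozenset({"templates/sales-emails"}),
}


def is_allowed(agent_id: str, resource: str) -> bool:
    """Default deny: allow only resources explicitly granted to this agent."""
    # Unknown agents fall back to an empty set, so they are denied everything.
    return resource in GRANTS.get(agent_id, frozenset())


print(is_allowed("sales-forecast-bot", "datasets/sales/q3"))       # True
print(is_allowed("sales-forecast-bot", "hr/performance-reviews"))  # False
print(is_allowed("unknown-bot", "datasets/sales/q3"))              # False
```

The sketch uses exact matching on purpose. Wildcard or prefix rules are convenient, but they tend to grant more than a tool actually needs.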

It’s all about moving away from broad, loosely defined access management toward granular access controls. Granular controls take more work to configure at first, but that’s better than finding out your AI tools may have exposed internal strategy to a public LLM.

The risks of integrating AI into your network

Unfortunately, that exact scenario (accidentally leaking your internal strategy) is happening more often than companies want to admit. According to IBM’s 2025 Cost of a Data Breach report, 13% of organizations have already suffered a breach involving their AI models or applications. Even worse? Of the organizations hit by those AI-related breaches, 97% reported lacking proper AI access controls.

When we talk about the specific threats a zero-trust architecture is built to stop, we’re usually looking at these everyday realities:

Shadow AI. This is arguably the most common struggle for IT right now. Employees just want to work faster, so they bypass official channels and paste sensitive client data into unapproved, public AI tools. Because the security team doesn’t even know these platforms are being used, there’s absolutely zero oversight. Your sensitive data is now sitting on a third-party server, and you have no way to pull it back.

Over-privileged AI agents. Say you build an internal bot to help your sales team draft emails and don’t strictly enforce least privilege access. That bot could inherit the ability to read every file the sales rep can open. That could easily include unreleased financial reports or private performance reviews. If the bot is compromised, the attacker gets a free pass to all of it.

Prompt injection. Bad actors can deliberately trick a public-facing or internal AI model by feeding it hidden, malicious instructions. This can manipulate the system into ignoring its own safety guidelines and revealing confidential data it was designed to protect.

Data poisoning. If your company trains its own models, attackers can subtly alter the training data so the system learns the wrong patterns and ultimately drives flawed business decisions.

When you look at this list, the common denominator is pretty obvious. Traditional setups blindly assume the software, or the person using it, will behave appropriately. Zero-trust security assumes they may not, and puts the necessary safeguards in place before any damage can occur.


How exactly does a zero-trust approach prevent AI threats?

If we accept that blindly trusting these tools sets you up for a data breach, the next step is figuring out how to enforce the precautions we just mentioned. Rather than hoping employees don’t paste client information into unapproved chatbots, zero-trust architecture takes the decision out of their hands by enforcing continuous verification.

Every time one of your AI agents tries to read a database or pull a file, the system demands proof of identity and purpose all over again. Because each request is checked against what that agent is allowed to do, an over-privileged bot becomes far less dangerous. Even if an attacker compromises a specific tool, they don’t get a free pass to explore the rest of your network.

Strict access controls keep that compromised AI confined to its own isolated workspace. If an email-drafting bot suddenly tries to access HR files it has no business seeing, the system recognizes the anomaly and instantly denies the request.

The same containment strategy is how you survive attacks like prompt injection. When you treat the AI itself as an untrusted user, you limit the blast radius of any successful trick. A manipulated model might still give a user a strange or misleading response. But because zero-trust security has restricted its permissions, it cannot execute malicious backend commands or siphon off your customer database.
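
One common way to apply this is to treat model output as untrusted input. The model can propose an action, but a separate gateway decides whether to run it. The sketch below is a minimal illustration under stated assumptions. It assumes the model emits actions as JSON. The action names, argument lists, and the `run_model_action` function are all hypothetical.

```python
import json

# Hypothetical allowlist: the only actions this assistant may trigger,
# each with the argument names it accepts. Everything else is rejected.
ALLOWED_ACTIONS: dict[str, set[str]] = {
    "draft_email": {"recipient", "subject", "body"},
    "lookup_order_status": {"order_id"},
}


def run_model_action(model_output: str) -> str:
    """Execute a model-proposed action only if policy explicitly allows it.

    The model's output is treated as untrusted input, so a prompt-injected
    request for an unlisted action is refused regardless of its wording.
    """
    try:
        proposal = json.loads(model_output)
    except json.JSONDecodeError:
        return "rejected: malformed action"
    if not isinstance(proposal, dict):
        return "rejected: malformed action"
    action = proposal.get("action")
    args = proposal.get("args", {})
    if not isinstance(action, str) or not isinstance(args, dict):
        return "rejected: malformed action"
    if action not in ALLOWED_ACTIONS:
        return f"rejected: action {action!r} not permitted"
    if not set(args) <= ALLOWED_ACTIONS[action]:
        return "rejected: unexpected arguments"
    # A real system would dispatch to the approved, least-privileged handler here.
    return f"executing {action}"
```

Consider a prompt-injected request such as `{"action": "export_customer_table"}`. The gateway rejects it because that action was never on the allowlist, however convincingly the injected instructions were worded.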

How to apply zero trust for AI security

If the whole point is keeping your intellectual property safe without slowing down your team, how do you actually roll this out?

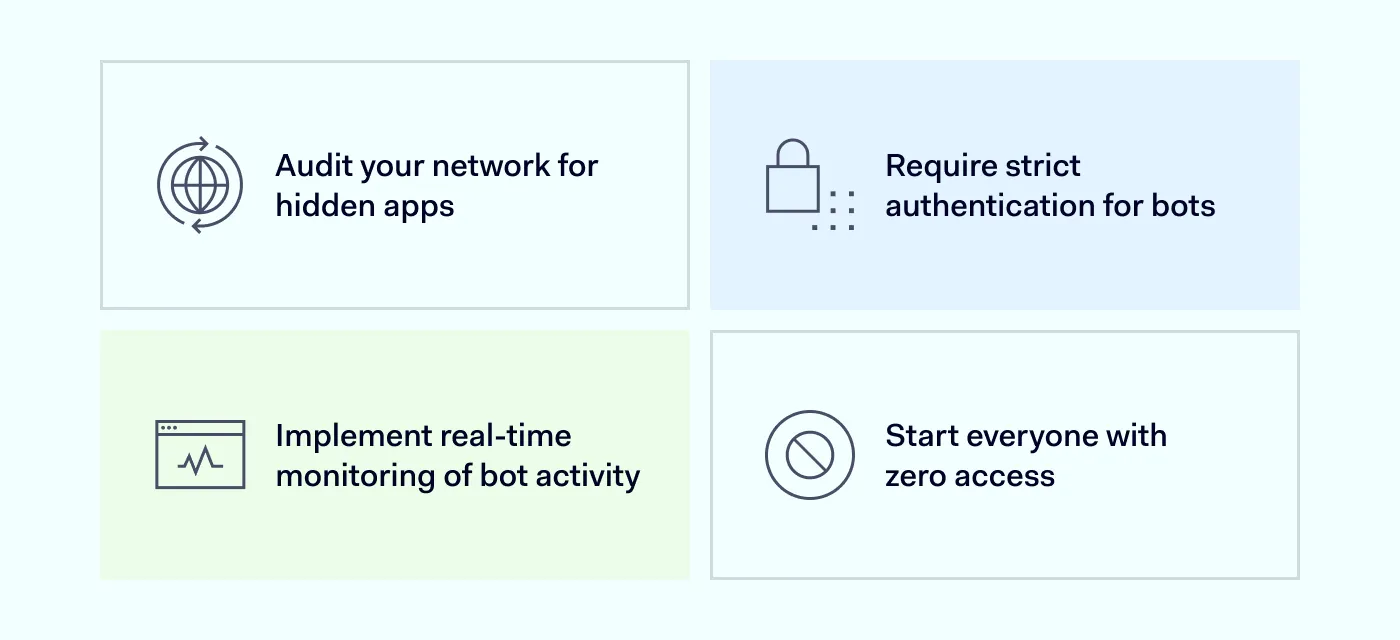

Audit your network for hidden apps

Because employees just want to get their work done faster, they often paste company data into public chatbots. Since you can’t secure a tool you don’t know about, your very first move has to be running a comprehensive network audit. Finding these unauthorized apps lets you make a clear decision on whether to officially approve them or block them entirely.
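
As a rough starting point, an audit can be as simple as scanning proxy or DNS logs for traffic to known AI services that haven't been approved. This minimal sketch makes two assumptions. First, it assumes a simplified, hypothetical log format of `user domain` per line. Second, the domain lists are placeholders that you would replace with your own.

```python
from collections import Counter

# Placeholder lists (hypothetical domains): AI services you want to detect,
# and the subset your organization has officially approved.
KNOWN_AI_DOMAINS = {"chat.example-ai.com", "api.example-llm.net", "assistant.example.org"}
APPROVED_AI_DOMAINS = {"api.example-llm.net"}


def find_shadow_ai(log_lines: list[str]) -> Counter:
    """Count requests per (user, domain) to known AI services that aren't approved.

    Assumes each log line looks like 'user domain' (a simplified, hypothetical format).
    """
    hits: Counter = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 2:
            continue  # skip malformed lines
        user, domain = parts[0], parts[1].lower()
        if domain in KNOWN_AI_DOMAINS and domain not in APPROVED_AI_DOMAINS:
            hits[(user, domain)] += 1
    return hits


logs = [
    "alice chat.example-ai.com",
    "bob api.example-llm.net",
    "alice chat.example-ai.com",
    "carol intranet.example.com",
]
print(find_shadow_ai(logs))
```

The output gives you a list of who is using which unapproved tool. That is the input you need for the approve-or-block decision described above.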

Require strict authentication for bots

Once you know exactly what is running on your network, stop treating those applications as trusted insiders. A script or internal AI agent running on a company device shouldn't get a free pass for that reason alone. Instead, assign a unique digital identity, such as its own credentials or certificate, to every piece of software. That way, the tool has to prove who it is each time it asks for access.
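
Here is a minimal sketch of per-agent identity. It assumes each agent has its own secret key and signs every request with HMAC-SHA256 from Python's standard `hmac` module. The agent name, key, and helper functions are hypothetical. Production setups often use mutual TLS certificates instead. They would also add timestamps or nonces so that a captured request can't simply be replayed, which this sketch does not cover.

```python
import hashlib
import hmac

# Hypothetical per-agent secrets. In practice these would come from a secrets
# manager, and many deployments use mutual TLS certificates instead.
AGENT_KEYS: dict[str, bytes] = {
    "email-draft-bot": b"replace-with-a-long-random-secret",
}


def sign(agent_id: str, payload: bytes) -> str:
    """Agent side: sign the request payload with the agent's own key."""
    return hmac.new(AGENT_KEYS[agent_id], payload, hashlib.sha256).hexdigest()


def verify(agent_id: str, payload: bytes, signature: str) -> bool:
    """Gateway side: check the signature on every request; unknown agents fail."""
    key = AGENT_KEYS.get(agent_id)
    if key is None:
        return False
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(expected, signature)
```

Each agent has its own key, so one leaked key exposes only that agent's identity rather than every tool on the network.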

Start everyone with zero access

That brings us to the most critical, yet tedious, part of the process. Many modern tools request broad data access by default to make integration easier. To counter this, deliberately cut those open connections and start every user and tool with zero permissions. If a department genuinely needs a bot to analyze customer data, you grant that specific access explicitly.

Monitor connections continuously

Once an AI tool is allowed inside, you still have to watch what it does with that access. In a zero-trust environment, a successful login at 9:00 AM doesn’t guarantee the software is still acting safely at 9:05 AM. To close that gap, the network is configured to re-verify the application every time it makes a new request for data.
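
The 9:00 versus 9:05 AM example can be sketched as a check that runs on every request, not just once at login. This minimal sketch assumes short-lived credentials with a hypothetical five-minute lifetime plus a revocation list. The names are illustrative.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical policy: credentials expire after five minutes and can be revoked early.
TOKEN_TTL = timedelta(minutes=5)
REVOKED: set[str] = set()


@dataclass(frozen=True)
class Credential:
    token_id: str
    agent_id: str
    issued_at: datetime  # use timezone-aware timestamps in a real system


def check_request(cred: Credential, now: datetime) -> bool:
    """Run on every request: reject credentials that are revoked or expired."""
    if cred.token_id in REVOKED:
        return False
    return now - cred.issued_at < TOKEN_TTL
```

Take a credential issued at 9:00 AM. A request at 9:03 AM passes, but one at 9:05 AM is refused until the agent re-authenticates. Revoking a token takes effect on the very next request.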

How NordLayer makes this work

Securing your network against AI risks doesn’t mean monitoring every user action. Instead, NordLayer enforces access controls at the network level. Using Zero Trust Network Access (ZTNA), you can segment your network so the everyday environments where people use AI are isolated from critical systems.

Because of this segmentation, a compromised tool has limited room to move. If an AI extension attempts to access sensitive data, the request is denied based on policy. NordLayer also helps ensure device compliance: before access is granted, Device Posture Monitoring checks whether a device meets your security requirements.
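
The general logic behind a posture check can be illustrated with a short, generic sketch. This is not how NordLayer's Device Posture Monitoring is implemented or configured. The reported attributes and the minimum OS version are hypothetical examples of requirements you might set.

```python
from dataclasses import dataclass


@dataclass
class DeviceReport:
    # Hypothetical attributes a posture agent might report about a device.
    managed: bool               # enrolled in company device management
    os_version: tuple[int, int]
    disk_encrypted: bool


# Hypothetical policy value: the oldest OS version still considered patched.
MIN_OS_VERSION = (14, 2)


def posture_ok(device: DeviceReport) -> tuple[bool, list[str]]:
    """Return whether the device may connect, plus any reasons it may not."""
    problems = []
    if not device.managed:
        problems.append("personal (unmanaged) device")
    if device.os_version < MIN_OS_VERSION:
        problems.append("operating system below minimum patched version")
    if not device.disk_encrypted:
        problems.append("disk encryption disabled")
    return (not problems, problems)
```

The function returns the reasons alongside the verdict. That makes it easier to tell users what to fix, such as updating the operating system, instead of just denying access.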

If someone tries to access internal systems from a personal or unpatched device, they are blocked immediately, reducing your chances of data exposure. Your team wants to use AI to work faster, and they absolutely should. NordLayer provides the network controls so they can do it safely.

Aistė Medinė

Editor and Copywriter

An editor and writer who’s into way too many hobbies – cooking elaborate meals, watching old movies, and occasionally splattering paint on a canvas. Aistė's drawn to the creative side of cybercrime, especially the weirdly clever tricks scammers use to fool people. If it involves storytelling, mischief, or a bit of mystery, she’s probably interested.